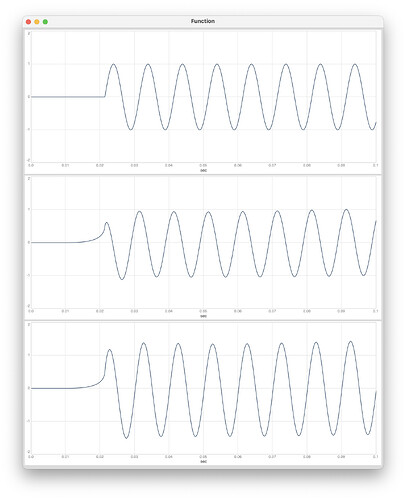

I had a go at converting a Schroeder reverb I found on the Max forums, but it sounds like the repeated calls to the allpass function are sharing the same buffer or something (it's giving a weird pitch-shifter effect).

Anyone have any tips?

(
~genDef = DynGenDef(\funcs,
"
function wrap(x, low, high) local(range, result)
(
  range = high - low;
  result = range != 0 ? (x - floor((x - low) / range) * range) : low;
  result;
);

function mstosamps(ms) ( srate * ms * 0.001 );

function linear_interp(x, y, a) ( x + a * (y - x) );

function lpf_op_simple(in, damp) local(init, prev, lpf)
(
  lpf = linear_interp(in, prev, damp);
  prev = lpf;
  lpf;
);

function allpass_delay(in, gain, delay_samps, buf) local(init, ptr, max_delay, read_pos, frac, a, b, tap, sig, out)
(
  !init ? (ptr = 0; init = 1;);
  max_delay = mstosamps(3000);
  read_pos = wrap(ptr - delay_samps, 0, max_delay);
  frac = read_pos - floor(read_pos);
  a = wrap(floor(read_pos), 0, max_delay);
  b = wrap(a + 1, 0, max_delay);
  tap = linear_interp(buf[a|0], buf[b|0], frac);
  sig = (in - tap) * gain;
  out = tap + sig;
  buf[ptr] = sig;
  ptr = wrap(ptr + 1, 0, max_delay);
  out;
);

function fbcomb(in, gain, delay_samps, damp, buf) local(init, ptr, read_pos, max_delay, frac, a, b, tap, out)
(
  !init ? (ptr = 0; init = 1;);
  max_delay = mstosamps(3000);
  read_pos = wrap(ptr - delay_samps, 0, max_delay);
  frac = read_pos - floor(read_pos);
  a = wrap(floor(read_pos), 0, max_delay);
  b = wrap(a + 1, 0, max_delay);
  tap = linear_interp(buf[a|0], buf[b|0], frac);
  tap = lpf_op_simple(tap, damp);
  out = (in - tap) * gain;
  buf[ptr] = out;
  ptr = wrap(ptr + 1, 0, max_delay);
  out;
);

size = in1; damp = in2;

ap1_buf[0] += 0;
ap2_buf[0] += 0;
ap3_buf[0] += 0;

sig = lpf_op_simple(in0, damp);
ap1 = allpass_delay(sig, 0.7, 347 * size, ap1_buf);
ap2 = allpass_delay(ap1, 0.7, 113 * size, ap2_buf);
ap3 = allpass_delay(ap2, 0.7, 370 * size, ap3_buf);
sig = ap3;

x1_buf[0] += 0;
x2_buf[0] += 0;
x3_buf[0] += 0;
x4_buf[0] += 0;

x1 = fbcomb(sig, 0.773, 1687, damp, x1_buf);
x2 = fbcomb(sig, 0.802, 1601, damp, x2_buf);
x3 = fbcomb(sig, 0.753, 2053, damp, x3_buf);
x4 = fbcomb(sig, 0.733, 2251, damp, x4_buf);

s1 = x1 + x3;
s2 = x2 + x4;

o1 = s1 + s2;
o2 = ((s1 + s2) * -1);
o3 = ((s1 - s2) * -1);
o4 = s1 - s2;

left = o1 + o3;
right = o2 + o4;

out0 = left;
out1 = right;
"
).send;

Ndef(\schroeder).clear;

Ndef(\schroeder, {
	var test = Decay.ar(Impulse.ar(1), 0.25, LFCub.ar(1200, 0, 0.1));
	var sig = DynGen.ar(2, ~genDef, test, 100, 0.5).sanitize;
	sig;
});

Ndef(\schroeder).play;
);
