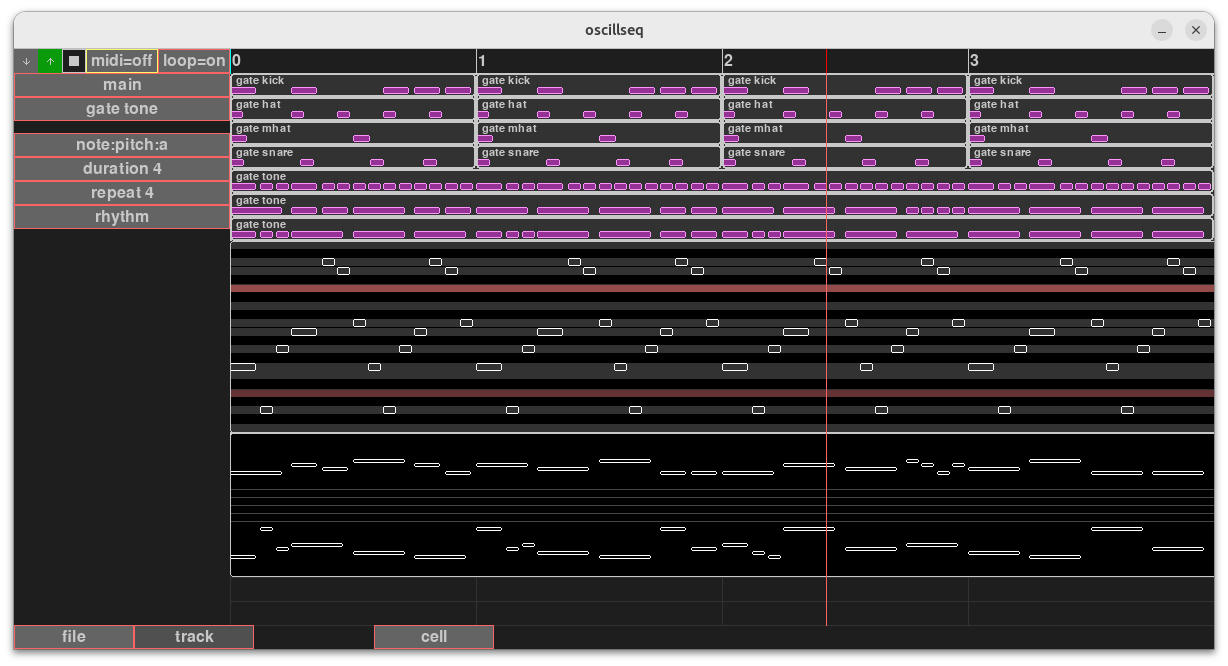

I’ve been wanting to make a personal music-creation software for a long while, and oscillseq is the current flagship. It looks like this right now. It’s incomplete and probably will always be like that:

I wonder where to take this? It’s a Python sequencer built on top of the supriya project.

The interface is very incomplete, but it runs on top of a small source language that is fairly readable. I figured I’d do the things that matter with the GUI and use text editing for the things that matter less.

It’s a fairly easy project to change, and I wonder where to take it next. I’m thinking of adding audio sampling support to it.

Below is what the source language looks like. The major things there are the sequencing commands and the tracker data. Basically, we start with a rhythm and overlay that rhythm with control data, which can be anything.
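To make the "rhythm first, control data on top" idea concrete, here is a minimal Python sketch. It is not oscillseq's actual code. It assumes that the letters `q`, `e` and `s` in a line like `gate tone e s s e ... / c4 g3 d4 ...` stand for quarter, eighth and sixteenth notes, and that the values after the `/` are cycled when there are fewer of them than rhythm steps. The names `DURATIONS` and `overlay` are made up for illustration.

```python
from fractions import Fraction
from itertools import cycle

# Assumed meaning of the rhythm letters, in beats (quarter note = 1 beat).
DURATIONS = {"q": Fraction(1), "e": Fraction(1, 2), "s": Fraction(1, 4)}

def overlay(rhythm, values):
    """Pair each rhythm step with a control value, cycling the values.

    Returns a list of (start_beat, length_in_beats, value) tuples.
    """
    events = []
    start = Fraction(0)
    for token, value in zip(rhythm.split(), cycle(values.split())):
        length = DURATIONS[token]
        events.append((start, length, value))
        start += length
    return events

# Six rhythm steps overlaid with four pitches; the pitches wrap around.
events = overlay("e s s e s s", "c4 g3 d4 e4")
for start, length, pitch in events:
    print(f"beat {start}: {pitch} for {length}")
```

The control values are kept as plain strings here, since the point is only that the rhythm decides when things happen and the overlay decides what the values are.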

There’s a graphical node-editor included for wiring synths. It accepts annotated synthdefs.

I’d welcome any ideas, really. Anything that could accomplish something neat with this. Questions are welcome too. One obvious question is where it is and how to use it. Well, the thing’s a swamp right now, but it’s been getting better. I dropped a few dependencies recently, and so far I think supriya is the only dependency that should be needed. The source is available on GitHub: cheery/oscillseq (OSC/SuperCollider sequencer written in pygame).

```
oscillseq aqua

drums {
  0 0 gate kick 10100111 duration 1;
  0 1 gate hat euclidean 5 16 repeat 1 duration 1;
  0 2 gate mhat euclidean 2 12 repeat 1 duration 1;
  0 3 gate snare euclidean 4 14 duration 1;
}

main {
  0 0 clip drums;
  1 0 clip drums;
  2 0 clip drums;
  3 0 clip drums;
  0 4 gate tone e s s e s s s s s s s s s s repeat 4 duration 4 / c4 g3 d4 e4 c5 b4 f4 [note:pitch:a];
  0 5 gate tone q e e q e e q q q e e q q q s s s s q q q q / c6 e6 d6 f6 e6 c6 e6 d6 f6 c6 c6 [note:pitch:b];
  0 to 10 7 pianoroll a f3 d5;
  0 to 10 15 staves b _._ 0;
  0 6 gate tone e s s q q q e s s q q e e e s s q q q q q q q
    / c3 c4 e3 f3 d3 [note:pitch:b];
}

@synths
  tone musical -122 -39 multi {
    amplitude=0.1454
  }
  kick bd 71 -151 multi {
    snappy=0.19, amp2=0.48, tone2=96.23
  }
  mhat MT 293 162 multi {
  }
  hat HT 464 159 multi {
  }
  snare sn 278 -148 multi {
    snappy=0.19, amp2=0.1818, tone2=96.23
  }

@connections
  kick:out system:out,
  tone:out system:out,
  mhat:out system:out,
  hat:out system:out,
  snare:out system:out
```
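For anyone unfamiliar with the `euclidean 5 16` style entries in the drum clip: a Euclidean rhythm spreads a number of pulses as evenly as possible over a number of steps. The Python sketch below shows one common way to compute such a pattern. It assumes the two numbers mean "pulses" and "steps", and the function name `euclidean` is only for illustration; oscillseq's own implementation may use a different algorithm or rotation.

```python
def euclidean(pulses, steps):
    """Spread `pulses` onsets as evenly as possible over `steps` slots.

    Returns a string of '1' (onset) and '0' (rest), in the same style as
    the literal kick pattern `10100111` in the listing. This is a simple
    modular-arithmetic formulation, not necessarily what oscillseq does.
    """
    if steps <= 0 or not 0 <= pulses <= steps:
        raise ValueError("need 0 <= pulses <= steps and steps > 0")
    return "".join(
        "1" if (i * pulses) % steps < pulses else "0"
        for i in range(steps)
    )

hat = euclidean(5, 16)   # five hits over sixteen steps
mhat = euclidean(2, 12)  # two hits over twelve steps
print(hat)
print(mhat)
```

Other formulations (such as Bjorklund's algorithm) can give the same spacing rotated to a different starting step, so the exact pattern heard in oscillseq may be shifted relative to this one.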