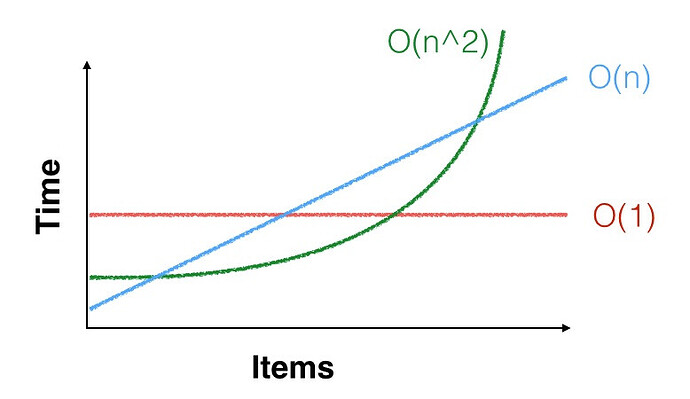

I checked out RingBuffer today. As I understand it, the point of a circular buffer is speed: items never have to be shifted around. So I was surprised to find that RingBuffer is slower than doing the same operation the supposedly inefficient way, with a List:

```supercollider
//*********** List

a = List.new;
100.do { |i| a.add((hi: i)) };

// writing:
(
var n = Main.elapsedTime;
a.add((hi: 99));
a.removeAt(0);
(Main.elapsedTime - n).postln;
)
// -> ~1 to 2e-5 s

// reading:
(
var n = Main.elapsedTime;
a.do { |item|
	item.hi = item.hi + 1;
};
(Main.elapsedTime - n).postln;
)
// -> ~1.5e-4 s
```

```supercollider
//*********** RingBuffer

a = RingBuffer.new(101); // must initialize with one more than the number of items we want! So strange
100.do { |i| a.add((hi: i)) };

// writing:
(
var n = Main.elapsedTime;
a.overwrite((hi: 99));
(Main.elapsedTime - n).postln;
)
// -> ~2 to 4e-5 s
// -> writing to the RingBuffer is slower than doing it with a List!!!

// reading:
(
var n = Main.elapsedTime;
a.do { |item|
	item.hi = item.hi + 1;
};
(Main.elapsedTime - n).postln;
)
// -> ~1.5e-4 s
// -> reading the RingBuffer takes about the same time as reading the List
```

```supercollider
// testing that the time measurement works the way I think it does:
(
var n = Main.elapsedTime;
(Main.elapsedTime - n).postln;
)
// -> ~1e-7 s
// so yes, it works fine
```
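Single-shot timings in the tens of microseconds can vary from run to run, so one way to make the write comparison more robust is to repeat each operation many times and report the mean per call. This is a sketch only: `~timeIt` is a hypothetical helper written for this example, and the repetition count of 10,000 is arbitrary. Each call still creates a fresh Event, as in the original test, so that allocation cost is included for both containers.

```supercollider
(
// Hypothetical helper: run func `reps` times and return the mean seconds per call.
~timeIt = { |func, reps = 10000|
	var start = Main.elapsedTime;
	reps.do { func.value };
	(Main.elapsedTime - start) / reps
};

// Fill both containers with 100 items, as in the tests above.
~list = List.new;
~ring = RingBuffer.new(101);
100.do { |i| ~list.add((hi: i)); ~ring.add((hi: i)) };

// List: append one item, drop the oldest (size stays at 100).
"List add + removeAt(0), mean s per call: ".post;
~timeIt.({ ~list.add((hi: 99)); ~list.removeAt(0) }).postln;

// RingBuffer: the buffer is full, so overwrite replaces the oldest item.
"RingBuffer overwrite, mean s per call: ".post;
~timeIt.({ ~ring.overwrite((hi: 99)) }).postln;
)
```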

Also, isn’t it strange that a RingBuffer can hold one item fewer than the size it was initialized with? I can’t understand why it would have been done this way.
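For what it's worth, a common design reason for this in ring buffers (which may or may not be what RingBuffer's authors intended) is that the buffer is tracked only by a read index and a write index, with "both indices equal" meaning empty. If every slot could be filled, the write index would wrap around onto the read index, and a full buffer would look exactly like an empty one; leaving one slot unused avoids that without keeping a separate item counter. The sketch below models just that index arithmetic; the variable names are made up for illustration and are not taken from RingBuffer's source.

```supercollider
(
// Hypothetical model, NOT RingBuffer's actual code: a buffer described only by
// a read index and a write index over a backing array of ~size slots.
~size = 4;
~readPos = 0;
~writePos = 0;
// Number of readable items, derived from the two indices alone.
~count = { (~writePos - ~readPos) % ~size };

// Write 3 items: writePos advances and wraps at the end of the array.
3.do { ~writePos = (~writePos + 1) % ~size };
~count.value.postln; // 3: every slot but one is filled

// A 4th write would fill the last free slot...
~writePos = (~writePos + 1) % ~size;
~count.value.postln; // ...but writePos == readPos again, so this computes 0 (looks empty)
)
```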

I pointed out some issues in the help for RingBuffer here, too: